Vocabulary

- think about: To consider something carefully.

- have to: Must do something; to be obliged to

- talking about: Discussing a particular topic.

- wait for: To wait until someone comes, or something happens

- for example: As an illustration or instance.

- make up: To invent or create a story

- at a time: Separately; one by one

- in parallel: Done at the same time; simultaneously.

- run in: To arrest a person and take them to a police station

- all at once: Suddenly; at the same time

- at once: Immediately; without delay

- for instance: As an example.

- in total: Completely; with everything added together.

- work together: To collaborate or cooperate with others to achieve a common goal.

- pay off: To give money to get a person to do something; to bribe

- in the end: Finally; after a period of time or series of events.

- process: To organize and use data in a computer

- essential: Extremely or most important and necessary

- typically: In a normal or usual way

- instance: An example of something; case

- interact: To talk or do things with each other

- category: A group of things that are similar in some way

- current: Electricity flowing through wires

- integrate: To combine together; make into one thing

- brand: A mark burned on an animal to show who owns it

- bet: To gamble money to win more money, e.g. on horses

- innovation: Process of creating new ideas or inventions

- corporate: Concerning (usually large) companies

- similar: Nearly the same; alike

- contrast: To compare; to show clear, obvious differences

- edge: An advantage you have over others

- perform: To carry out an action well or successfully

- parallel: To be equal to, or like, something else

- gigantic: Extremely large

- generate: To create, produce, or bring into existence

- result: Something produced through tests or experiments

- performance: Act of doing something

- communicate: To give and exchange information

- pace: Rate of speed at which something moves or happens

- hype: Advertising, writing, or talk to spark interest

- architect: Person who designs and advises on buildings

- text: To send a message by phone or other device

- company: Good feeling from being with someone else

- lot: What happens to a person in life from chance; fate

- margin: Edge of an area

- narrator: Person or character who tells a story

- offer: Price you say you are willing to pay for something

- information: Collection of facts and details about something

- microscopic: Too small to be seen with the eyes

- mixture: Something made by combining two or more things

- boom: Very fast increase in growth or popularity

- chip: To break a small piece off something such as a cup

- step: Movement done as part of a particular dance

- question: To ask for or try to get information

- bandwidth: Data transmission rate over the internet

- fast: In a way that is difficult to move or change

- world: All the humans, events, and activities on the earth

- bubble: A small ball of air inside of a liquid

- train: Line of people or animals moving in the same direction

- work: The product of some artistic or literary endeavor

- design: To plan in a particular way to fulfill a purpose

- dot: To place small amounts/things in various places

- give: Degree of flexibility in something, a material

- datum: Item of factual information

- dice: To cut food or other things into small pieces

- latency: State of being not yet evident or active

- calculation: Process or result of using mathematics

- interconnect: To join or be joined together (computers/theories)

- wafer: Thin light cookie often accompanying ice cream

- sequentially: In an arranged order

- aw: The sound made when seeing something cute


She took a brave step forward, leaving behind her comfort zone to chase her dreams.

Vocabulary

- brave (adj.): Having courage

- comfort zone (phr.): A familiar situation where one feels safe

Explanation

"A brave step" is a noun phrase in which the adjective "brave" modifies the noun "step", meaning "a courageous step".

"Forward" is an adverb modifying "step", meaning "ahead".

The whole phrase serves as the object of the verb "took", answering the question "took what?": she took a brave step forward.


brave

US /brev/

UK /breɪv/

adj. Having courage

v.t. To face bravely

A2 Elementary

Get the full experience in the app

Practice speaking anytime and get instant pronunciation feedback

Try this speaking exercise.

Try practicing with this sentence.

80

0

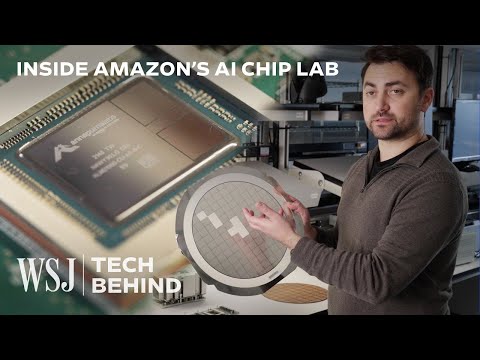

林宜悉 posted on 2024/01/06

Ever wondered what goes into making the super-smart AI chips that power our world? This video gives you an amazing peek behind the scenes at how companies like AWS and Nvidia design and build these complex pieces of technology, and you'll pick up some seriously useful advanced vocabulary along the way!
